The rapid advancement of generative artificial intelligence has fundamentally altered the global energy landscape, shifting the conversation from software efficiency to the physical limits of our electrical grids. As part of The Global AI Infrastructure Boom: Data Center Growth, GPU Clusters, and Scalability, this look at Powering the AI Revolution: Grid Stability and Energy Infrastructure Needs explains why power has become a critical concern for developers, investors, and policymakers alike. Unlike traditional cloud computing, AI training and inference impose sustained, high-density power loads that push existing utility infrastructure to its limits. Meeting that demand requires rethinking how energy is generated, transmitted, and managed on-site, so that the AI boom does not stall simply because new hardware cannot be connected to the grid.

The Massive Shift in Energy Density

Traditional data centers typically operate at power densities of 5 to 10 kilowatts (kW) per rack. The hardware behind modern large language models (LLMs) has shattered these norms: AI clusters often reach 40 kW to 100 kW per rack. This evolution is driven by Next-Generation GPU Hardware: Powering the Future of AI Clusters, where a single eight-GPU server can draw on the order of 10 kW at full load.
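
The jump from per-server draw to per-rack and per-cluster load is simple multiplication, but it is worth seeing the numbers. The sketch below assumes an illustrative 10.2 kW peak draw for an 8-GPU server and a hypothetical 16,384-GPU cluster; `ServerSpec` and the helper functions are hypothetical names, and the figures are not taken from any specific vendor datasheet.

```python
import math
from dataclasses import dataclass


@dataclass
class ServerSpec:
    gpus_per_server: int
    max_power_kw: float  # assumed peak draw of one server chassis, in kW


def rack_power_kw(server: ServerSpec, servers_per_rack: int) -> float:
    """Worst-case IT power for one rack (servers only; switches and PDU losses ignored)."""
    return server.max_power_kw * servers_per_rack


def cluster_it_power_mw(server: ServerSpec, total_gpus: int) -> float:
    """Worst-case IT power for a cluster, rounding up to whole servers."""
    servers = math.ceil(total_gpus / server.gpus_per_server)
    return servers * server.max_power_kw / 1000.0


# Illustrative assumption: an 8-GPU server drawing about 10.2 kW at full load.
eight_gpu_server = ServerSpec(gpus_per_server=8, max_power_kw=10.2)

print(rack_power_kw(eight_gpu_server, servers_per_rack=4))
print(round(cluster_it_power_mw(eight_gpu_server, total_gpus=16_384), 1))
```

Four such servers per rack already land at about 40.8 kW, the low end of the AI range above, and the hypothetical 16,384-GPU cluster needs roughly 20.9 MW of IT power before any cooling overhead is added.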

This surge in demand gives utilities a “lumpy” load profile: demand arrives in large, concentrated blocks at single sites rather than growing gradually across a service area. And unlike residential areas, where power usage peaks in the evening, AI data centers run at high “load factors,” drawing near-peak power around the clock. This constant draw leaves little room for the grid to “rest” or schedule maintenance, one of the concerns raised in The Hidden Cost of Intelligence: Addressing AI Energy Consumption Trends. On top of that steady baseline, when tens of thousands of GPUs are synchronized for a training run, their power can ramp up or down together, and these sudden swings can cause frequency fluctuations that threaten stability for other grid users.
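
Load factor is average demand divided by peak demand over a period. The sketch below computes it for two hypothetical 24-hour profiles (illustrative numbers, not measurements) to show why a near-flat AI load scores close to 1.0 while a residential feeder with an evening peak scores far lower.

```python
def load_factor(hourly_kw: list[float]) -> float:
    """Load factor = average demand / peak demand over the period (between 0 and 1)."""
    if not hourly_kw or max(hourly_kw) <= 0:
        raise ValueError("need at least one positive reading")
    return (sum(hourly_kw) / len(hourly_kw)) / max(hourly_kw)


# Hypothetical 24-hour profiles in kW (illustrative numbers, not measurements).
residential = [1.0] * 7 + [2.0] * 10 + [4.0] * 5 + [1.5] * 2  # evening peak
ai_cluster = [20_000.0] * 23 + [19_000.0]                       # near-flat draw

print(round(load_factor(residential), 2))  # 0.52
print(round(load_factor(ai_cluster), 2))   # 1.0
```

A load factor near 1.0 means the peak is effectively the normal operating point, which is why utilities must plan for the full draw at all hours.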

Grid Stability and the Challenge of “Always-On” Power

Grid stability refers to the ability of an electrical system to maintain a steady frequency and voltage despite changes in load or supply. AI workloads present a unique challenge to this stability because of their sheer scale. When Architecting GPU Clusters: The Backbone of Modern AI Hardware Infrastructure, engineers must account for the impact that thousands of H100 or Blackwell GPUs have on the local substation.

- Frequency Regulation: Large-scale AI training runs can cause “step changes” in demand. If a cluster suddenly goes offline due to a software error, the abrupt loss of load pushes grid frequency upward, and a large enough excursion can trip protective relays or generators.

- Voltage Sag: Conversely, when a massive training job starts, the sudden draw can cause local voltage to drop, leading to brownouts if the infrastructure isn’t robust enough.

- Intermittency Issues: While many AI companies aim for “Green AI,” relying solely on wind or solar is difficult because AI workloads require constant power. Balancing renewable energy with the need for 24/7 stability is one of the greatest AI Scalability Challenges today.
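
The direction and rough size of these frequency effects can be estimated with two standard simplified power-system relationships: the swing equation gives the initial rate of change of frequency, RoCoF = −f₀·ΔP / (2·H·S), and governor droop gives the settled deviation, Δf = −R·(ΔP / S)·f₀. The sketch below assumes a hypothetical 20 GW grid area with an aggregate inertia constant of 4 s and 5% droop, and ignores load damping and reserve limits, so treat its outputs as order-of-magnitude illustrations only.

```python
from dataclasses import dataclass


@dataclass
class GridArea:
    nominal_hz: float          # 60 Hz in North America, 50 Hz in Europe
    online_capacity_mw: float  # generation online (the per-unit base S)
    inertia_h_s: float         # aggregate inertia constant H, in seconds, on base S
    droop: float               # governor droop R as a fraction (0.05 = 5%)


def initial_rocof_hz_per_s(grid: GridArea, load_step_mw: float) -> float:
    """Swing-equation RoCoF immediately after a load step, before governors act.
    Positive load_step_mw = load added; negative = load lost."""
    return -grid.nominal_hz * load_step_mw / (2 * grid.inertia_h_s * grid.online_capacity_mw)


def settled_deviation_hz(grid: GridArea, load_step_mw: float) -> float:
    """Steady-state offset after primary (droop) response, assuming every online
    unit responds and ignoring load damping."""
    return -grid.droop * (load_step_mw / grid.online_capacity_mw) * grid.nominal_hz


# Hypothetical grid area and a hypothetical 300 MW training cluster tripping offline.
area = GridArea(nominal_hz=60.0, online_capacity_mw=20_000.0, inertia_h_s=4.0, droop=0.05)
trip = -300.0

print(f"RoCoF: {initial_rocof_hz_per_s(area, trip):+.4f} Hz/s")
print(f"Settled deviation: {settled_deviation_hz(area, trip):+.4f} Hz")
```

For the hypothetical 300 MW trip, this gives an initial RoCoF of about +0.11 Hz/s and a settled rise of about 0.045 Hz. Both results scale inversely with the size of the grid area (and RoCoF also with its inertia), so the same step on a smaller or lower-inertia system produces proportionally larger excursions.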

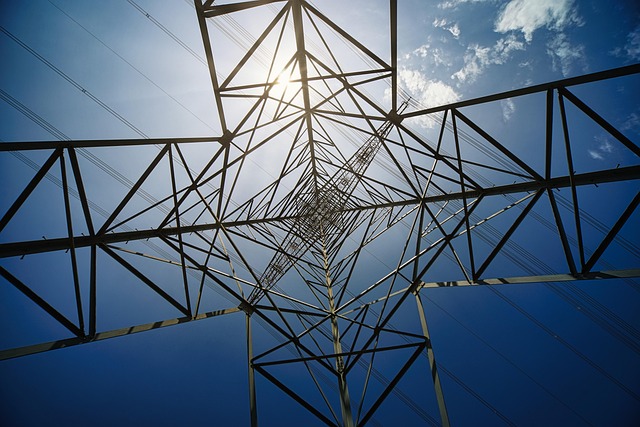

Infrastructure Needs: Beyond the Data Center Walls

To relieve these constraints, the industry is looking beyond the server rack and toward the transformer. Lead times for large power transformers and high-voltage switchgear can stretch to several years, creating a physical bottleneck for AI growth. Building out the necessary infrastructure requires a multi-pronged approach:

| Infrastructure Component | Role in Powering AI | Current Challenge |
|---|---|---|
| High-Voltage Substations | Stepping down power from the transmission grid to the data center. | Long lead times for construction and permitting. |
| Microgrids & Storage | Providing on-site backup and load leveling using batteries. | High capital expenditure and supply chain constraints for lithium. |
| On-Site Generation | Small Modular Reactors (SMRs) or natural gas turbines to bypass the grid. | Regulatory hurdles and public perception issues. |
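
The load-leveling role of on-site storage can be illustrated with a simple peak-shaving rule: discharge the battery whenever site demand exceeds a grid import cap, and recharge from the headroom when demand falls below it. This is a hypothetical, idealized controller (no round-trip losses, hourly steps, battery starts full), not a description of any vendor's BESS software; `peak_shave` and all inputs are illustrative.

```python
def peak_shave(load_mw: list[float], cap_mw: float, battery_mwh: float,
               max_rate_mw: float, step_h: float = 1.0) -> list[float]:
    """Grid import per step when a battery holds site draw at or below cap_mw.

    Idealized greedy rule (hypothetical): no round-trip losses, battery starts full,
    discharge above the cap, recharge from headroom below it.
    """
    soc_mwh = battery_mwh  # state of charge
    grid_import = []
    for load in load_mw:
        if load > cap_mw:
            # Discharge just enough to hold the cap, limited by power rating and stored energy.
            discharge = min(load - cap_mw, max_rate_mw, soc_mwh / step_h)
            soc_mwh -= discharge * step_h
            grid_import.append(load - discharge)
        else:
            # Recharge using headroom under the cap, limited by rating and free capacity.
            charge = min(cap_mw - load, max_rate_mw, (battery_mwh - soc_mwh) / step_h)
            soc_mwh += charge * step_h
            grid_import.append(load + charge)
    return grid_import


site_load = [80.0, 80.0, 110.0, 120.0, 115.0, 90.0, 80.0]  # hypothetical hourly MW
print(peak_shave(site_load, cap_mw=100.0, battery_mwh=40.0, max_rate_mw=25.0))
```

With a hypothetical 100 MW cap, a 40 MWh battery, and a 25 MW rate limit, the load above becomes [80, 80, 100, 100, 105, 100, 100] MW of grid import: the battery holds the cap for the first two peak hours, then runs out partway through the third and lets 5 MW through. That is the kind of sizing trade-off operators have to model before relying on storage.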

Furthermore, managing the thermal output of these high-density clusters is inseparable from the power conversation. Effective energy management requires Advanced Cooling Solutions for AI Data Centers: Managing Heat and Energy, as traditional air cooling is no longer sufficient for the 100kW+ rack densities of the AI era.
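
Two back-of-the-envelope relationships tie cooling to power: virtually all electrical power delivered to IT equipment ends up as heat that must be removed, and Power Usage Effectiveness (PUE), the ratio of total facility energy to IT energy, captures how much extra power cooling and other overheads add. The sketch below applies both; the function names and the PUE values in the example are assumptions chosen for illustration.

```python
KW_PER_TON_OF_REFRIGERATION = 3.517  # 1 ton = 12,000 BTU/h, roughly 3.517 kW of heat removal


def facility_power_mw(it_power_mw: float, pue: float) -> float:
    """Approximate total facility draw by applying PUE (an energy ratio) to IT power."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_power_mw * pue


def cooling_load_tons(it_power_mw: float) -> float:
    """Heat to reject, treating essentially all IT electrical power as heat."""
    return it_power_mw * 1000.0 / KW_PER_TON_OF_REFRIGERATION


# Hypothetical 20 MW cluster compared at two assumed PUE values.
for pue in (1.5, 1.2):
    print(f"PUE {pue}: {facility_power_mw(20.0, pue):.1f} MW total")

# One hypothetical 100 kW rack.
print(f"{cooling_load_tons(0.1):.1f} tons of cooling")
```

For the hypothetical 20 MW cluster, moving from a PUE of 1.5 to 1.2 cuts total facility demand from 30 MW to 24 MW, and a single 100 kW rack needs roughly 28 tons of cooling capacity. Every megawatt saved on overhead is a megawatt the grid does not have to deliver.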

Case Studies: Innovations in Energy Procurement

Several industry leaders are already taking drastic steps to secure their energy supply, and their approaches offer a blueprint for meeting AI's grid stability and energy infrastructure needs.

Case Study 1: The Nuclear Revival (Microsoft & Constellation Energy)

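In a landmark deal announced in 2024, Microsoft signed a 20-year power purchase agreement with Constellation Energy to support restarting Unit 1 at Three Mile Island, a reactor that was shut down in 2019 for economic reasons. This move highlights a growing trend: AI giants are no longer content with buying “green credits”; they want dedicated, carbon-free baseload power that can run 24/7. Strictly speaking, this deal is a grid-connected purchase rather than a direct feed. “Behind-the-meter” arrangements go a step further by co-locating the data center with a power plant so it draws power directly from the source, reducing the strain on the public transmission grid.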

Case Study 2: Northern Virginia’s Grid Bottlenecks

Northern Virginia, the data center capital of the world, has faced significant challenges. Dominion Energy warned that its transmission infrastructure could not keep up with the pace of data center development, which led to a temporary pause on new connections in parts of the region. Developers were pushed to explore on-site natural gas generation and battery storage to bridge the gap while new high-voltage lines are built. The episode is a cautionary tale, examined further in Macroeconomics of AI Data Centers.

Actionable Insights for Scaling AI Infrastructure

For organizations looking to navigate the complexities of power and grid stability, the following strategies are essential:

- Implement Software-Based Power Capping: Use software to throttle GPU performance during peak grid demand periods. This is a key part of Maximizing GPU Efficiency: Software Strategies for AI Infrastructure Optimization.

- Invest in Energy Storage: Battery Energy Storage Systems (BESS) can act as a buffer, soaking up excess renewable energy and discharging it when the AI cluster hits peak load.

- Geographic Diversification: Instead of building in established hubs like Ashburn or Dublin, look toward regions with surplus energy capacity, even if they are geographically remote. This often involves Distributed AI Training: Overcoming Scalability Bottlenecks across multiple locations.

- Strategic Investment: Investors should look toward the “pick and shovel” plays in the energy sector. For more details, see Investing in AI Infrastructure: Top Stocks and ETFs Driving Data Center Growth.
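
The power-capping strategy can be reduced to a small policy function: divide the power budget left after non-GPU loads by the number of GPUs, tighten the budget during a grid peak event, and clamp the result to the range the hardware accepts. Everything here (the function name, the 20% peak reduction, the example limits) is a hypothetical sketch; actually applying the resulting limit would go through vendor tooling such as the power-limit option of NVIDIA's `nvidia-smi`.

```python
def per_gpu_power_cap_w(site_budget_kw: float, non_gpu_load_kw: float, gpu_count: int,
                        min_cap_w: float, max_cap_w: float, grid_peak: bool,
                        peak_reduction: float = 0.2) -> float:
    """Uniform per-GPU power limit that keeps the site within its power budget.

    Hypothetical policy: during a grid peak event the site budget is cut by
    `peak_reduction` (20% by default). The result is clamped to the range the
    hardware accepts; if even `min_cap_w` exceeds the budget, capping alone is
    not enough and jobs would have to be paused or moved.
    """
    budget_kw = site_budget_kw * (1.0 - peak_reduction) if grid_peak else site_budget_kw
    gpu_budget_w = (budget_kw - non_gpu_load_kw) * 1000.0
    return max(min_cap_w, min(max_cap_w, gpu_budget_w / gpu_count))


# Hypothetical site: 1,000 kW budget, 200 kW of non-GPU load, 1,024 GPUs.
common = dict(site_budget_kw=1_000.0, non_gpu_load_kw=200.0, gpu_count=1_024,
              min_cap_w=200.0, max_cap_w=700.0)
print(per_gpu_power_cap_w(**common, grid_peak=False))
print(per_gpu_power_cap_w(**common, grid_peak=True))
```

With these hypothetical numbers, normal operation leaves each GPU at its 700 W maximum, while a peak event lowers the cap to about 586 W per GPU. A uniform cap is the simplest choice; a real scheduler might instead cap low-priority jobs first.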

Conclusion

The success of the AI era is inextricably linked to our ability to modernize the electrical grid and innovate in energy production. Meeting AI's grid stability and energy infrastructure needs is not just a technical challenge; it is a fundamental requirement for the continued expansion of LLMs and autonomous systems. By integrating advanced cooling, on-site generation, and intelligent software management, the industry can overcome these bottlenecks. To see how these energy needs fit into the larger picture of hardware and scaling, explore our comprehensive guide on The Global AI Infrastructure Boom: Data Center Growth, GPU Clusters, and Scalability.

Frequently Asked Questions

How does AI impact grid stability more than traditional cloud computing?

AI workloads require much higher power density and run at a constant, high load factor. That sustained baseline, combined with sharp “step changes” in power draw as training jobs start, stop, or synchronize, puts significantly more stress on frequency regulation and transformer capacity than traditional web hosting does.

What is “behind-the-meter” power, and why is it popular for AI?

Behind-the-meter power involves generating electricity on-site or connecting directly to a power plant without relying on the public transmission grid. This allows AI data centers to bypass grid congestion and secure a dedicated power supply that is less exposed to public utility outages.

Are renewables enough to power the AI revolution?

While wind and solar are crucial, their intermittency makes them difficult to use as the sole source for AI clusters that require 24/7 “always-on” power. Most providers use a mix of renewables paired with battery storage or carbon-free baseload sources like nuclear energy.

How does energy infrastructure affect the cost of training an AI model?

Energy is one of the largest operational expenses in AI. In regions with high electricity costs or grid constraints, the cost of training can skyrocket. Infrastructure bottlenecks also delay time-to-market, which has significant macroeconomic implications for AI companies.
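
A first-order estimate of a training run's electricity bill multiplies GPU count, average power per GPU, run length, PUE, and the local electricity price. The function name and all figures in the example are assumptions for illustration; in particular, `avg_kw_per_gpu` is meant to include each GPU's share of server, networking, and storage power.

```python
def training_energy_and_cost(gpu_count: int, avg_kw_per_gpu: float, hours: float,
                             pue: float, price_per_kwh: float) -> tuple[float, float]:
    """Return (facility energy in MWh, electricity cost) for one training run.

    avg_kw_per_gpu should include each GPU's share of server, network and storage power.
    """
    it_kwh = gpu_count * avg_kw_per_gpu * hours
    facility_kwh = it_kwh * pue  # cooling and other overheads via PUE
    return facility_kwh / 1000.0, facility_kwh * price_per_kwh


# Hypothetical 30-day run on 4,096 GPUs, compared at two assumed electricity prices.
for price in (0.08, 0.15):
    mwh, cost = training_energy_and_cost(4_096, 1.0, 30 * 24, 1.2, price)
    print(f"${price:.2f}/kWh -> {mwh:,.0f} MWh, ${cost:,.0f}")
```

Under these assumptions the facility consumes roughly 3,539 MWh; at $0.08 per kWh that is about $283,000, and at $0.15 per kWh about $531,000. The same run costs roughly 1.9 times as much purely because of where it is sited.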

Can software optimizations reduce the need for new energy infrastructure?

Yes, software can help by implementing power-aware scheduling and distributed training across different regions. However, while software improves efficiency, the sheer growth in AI model size usually outpaces these gains, still requiring massive physical infrastructure investments.
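
Power-aware scheduling can be as simple as sliding a deferrable job into the cheapest window of a price forecast. The sketch below finds the contiguous block of hours with the lowest total price; `best_start_hour` and the price series are hypothetical, and the same function works unchanged with a carbon-intensity forecast in place of prices.

```python
def best_start_hour(hourly_price: list[float], job_hours: int) -> int:
    """Index of the contiguous window of `job_hours` with the lowest total price."""
    if not 0 < job_hours <= len(hourly_price):
        raise ValueError("job must fit inside the forecast horizon")
    window = sum(hourly_price[:job_hours])
    best_start, best_total = 0, window
    for start in range(1, len(hourly_price) - job_hours + 1):
        # Slide the window one hour: add the newest hour, drop the oldest.
        window += hourly_price[start + job_hours - 1] - hourly_price[start - 1]
        if window < best_total:
            best_start, best_total = start, window
    return best_start


# Hypothetical 12-hour price forecast (per MWh).
forecast = [60.0, 55.0, 40.0, 30.0, 25.0, 28.0, 45.0, 70.0, 90.0, 85.0, 65.0, 50.0]
print(best_start_hour(forecast, job_hours=3))
```

For this forecast a 3-hour job is placed at hour index 3, the window covering the price trough (30 + 25 + 28 = 83). The sliding sum keeps the search linear in the forecast length.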

What role do Small Modular Reactors (SMRs) play in AI infrastructure?

SMRs are seen as a potential “holy grail” for AI power because they provide carbon-free, constant energy in a compact footprint. Many data center operators are investing in SMR technology to provide dedicated power directly to future GPU clusters.

How does this topic connect to the broader AI infrastructure boom?

Energy infrastructure is the “physical floor” of the AI boom. Without stable power, even the most advanced GPU clusters and data centers cannot function, making grid stability one of the most critical variables in the Global AI Infrastructure Boom.